Workshop 4: Normal Mapping

As a bonus we will add normal mapping to our lighting algorithm. Normal mapping, a form of bump mapping, creates the illusion of small surface details that are not present in the actual geometry. This is done by storing a normal vector for each pixel in a secondary texture map. This normal is used in the lighting algorithms instead of the vertex normal.

Normal mapping affects only the brightness of pixels, because the lighting depends on the normal. The actual geometry is not altered. This can be noticeable when looking at the outline of a mesh or when viewing a normal-mapped surface from a glancing angle.

We will store the perturbed normals in a normal map. This is just an RGB image storing the x, y and z components of the normal in the red, green and blue channels of the image. Normal maps can be generated from a high-res mesh in high-end 3D modelling packages like 3D Studio Max or drawn in Photoshop with the normal map plug-in.

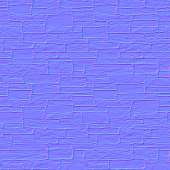

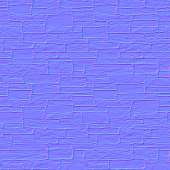

Here is a scaled down version of the normal map we will be using, generated in a few clicks from the source image in Photoshop:

You can see the full size normal map in the model "Blob.mdl" in the normal mapping demo.

Because the RGB values in images only range from 0 to 1 (often represented as 0 to 255 in image manipulation programs), the components of the normal vectors are "compressed" from the [-1..1] range into the [0..1] range when the normal map is generated. In the shader we can recover the original values by multiplying them by 2 and then subtracting 1.
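As a rough illustration of this unpacking step, here is a minimal HLSL sketch. The helper name DecodeNormal is hypothetical and not part of the workshop shader, which performs the same arithmetic inline in its pixel shader.

```hlsl
// Hypothetical helper (not part of the workshop shader): expands a color
// read from the normal map from the [0..1] range back to the [-1..1] range.
float3 DecodeNormal(float3 Encoded)
{
    return Encoded * 2.0f - 1.0f;
}

// Worked example: the typical bluish texel (128, 128, 255) is sampled as
// roughly (0.502, 0.502, 1.0) and decodes to roughly (0.004, 0.004, 1.0),
// a normal pointing almost straight out of the surface.
```

In a pixel shader it could be called as DecodeNormal(tex2D(NormalMapSampler, InTex).rgb).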

The normals in a normal map are stored in so-called "tangent space" (also known as "texture space"). In the tangent space coordinate system, the W axis is the normal of the surface, the U axis is the tangent that is passed from the engine, and the V axis is perpendicular to both W and U.

Because the normals are stored in tangent space, we need to convert the light and view direction vectors to tangent space as well to be able to compare these vectors. We calculate a matrix to transform the vectors from world space to tangent space and use it in the vertex shader to convert the view and light vectors to tangent space. These vectors are then passed to the pixel shader, where the perturbed normal is read from the normal map. The perturbed normal, the light vector and the view vector are then used in the lighting algorithms we have discussed in the previous chapters.

Implementation

    // Tweakables:
    static const float AmbientIntensity = 1.0f;  // The intensity of the ambient light.
    static const float DiffuseIntensity = 1.0f;  // The intensity of the diffuse light.
    static const float SpecularIntensity = 1.0f; // The intensity of the specular light.
    static const float SpecularPower = 8.0f;     // The specular power. Used as 'glossiness' factor.
    static const float4 SunColor = {0.9f, 0.9f, 0.5f, 1.0f}; // Color vector of the sunlight.

    // Application fed data:
    const float4x4 matWorldViewProj; // World*view*projection matrix.
    const float4x4 matWorld;         // World matrix.
    const float4 vecAmbient;         // Ambient color.
    const float4 vecSunDir;          // Sun light direction vector.
    const float4 vecViewPos;         // View position.

    float3x3 matTangent; // hint for the engine to create tangents in TEXCOORD2

    texture entSkin1;
    sampler ColorMapSampler = sampler_state // Color map sampler.
    {
        Texture = <entSkin1>;
        MipFilter = Linear; // required for mipmapping
    };

    texture entSkin2; // Normal map.
    sampler NormalMapSampler = sampler_state // Normal map sampler.
    {
        Texture = <entSkin2>;
        MipFilter = None; // a normal map usually has no mipmaps
    };

    // Vertex Shader:
    void NormalMapVS( in float4 InPos: POSITION,
                      in float3 InNormal: NORMAL,
                      in float2 InTex: TEXCOORD0,
                      in float4 InTangent: TEXCOORD2,
                      out float4 OutPos: POSITION,
                      out float2 OutTex: TEXCOORD0,
                      out float3 OutViewDir: TEXCOORD1,
                      out float3 OutSunDir: TEXCOORD2)
    {
        // Transform the vertex from object space to clip space:
        OutPos = mul(InPos, matWorldViewProj);

        // Pass the texture coordinate to the pixel shader:
        OutTex = InTex;

        // Compute 3x3 matrix to transform from world space to tangent space:
        matTangent[0] = mul(InTangent.xyz, matWorld);
        matTangent[1] = mul(cross(InTangent.xyz, InNormal)*InTangent.w, matWorld);
        matTangent[2] = mul(InNormal, matWorld);

        // Calculate the view direction vector in tangent space:
        OutViewDir = normalize(mul(matTangent, (vecViewPos - mul(InPos,matWorld))));

        // Calculate the light direction vector in tangent space:
        OutSunDir = normalize(mul(matTangent, -vecSunDir));
    }

    // Pixel Shader:
    float4 NormalMapPS(
        in float2 InTex: TEXCOORD0,
        in float3 InViewDir: TEXCOORD1,
        in float3 InSunDir: TEXCOORD2): COLOR
    {
        // Read the normal from the normal map and convert from [0..1] to [-1..1] range
        float3 BumpNormal = tex2D(NormalMapSampler, InTex)*2 - 1;

        // Calculate the ambient term:
        float4 Ambient = AmbientIntensity * vecAmbient;

        // Calculate the diffuse term:
        float4 Diffuse = DiffuseIntensity * SunColor * saturate(dot(InSunDir, BumpNormal));

        // Calculate the reflection vector:
        float3 R = normalize(2 * dot(BumpNormal, InSunDir) * BumpNormal - InSunDir);

        // Calculate the specular term:
        InViewDir = normalize(InViewDir);
        float Specular = pow(saturate(dot(R, InViewDir)), SpecularPower) * SpecularIntensity;

        // Fetch the pixel color from the color map:
        float4 Color = tex2D(ColorMapSampler, InTex);

        // Calculate final color:
        return (Ambient + Diffuse + Specular) * Color;
    }

    // Technique:
    technique NormalMapTechnique
    {
        pass P0
        {
            VertexShader = compile vs_2_0 NormalMapVS();
            PixelShader = compile ps_2_0 NormalMapPS();
        }
    }

To use the normal mapping shader we have to tell the engine that it needs to calculate tangents and send them to the vertex shader. We do this by defining a matTangent matrix in the shader. When the engine finds a global matrix of this name in the shader, it takes this as a hint to calculate tangents. More on what we're doing with that matrix follows in the next section.

We have added another texture (entSkin2, the second skin in MED) and sampler object for the normal map.

We deactivated mipmapping on the normal map because automatic mipmap generators usually can't handle normal maps. The vertex shader calculates the world-to-tangent-space matrix and transforms the view and light direction vectors to tangent space.

    // Vertex Shader:
    void NormalMapVS( in float4 InPos: POSITION,
                      in float3 InNormal: NORMAL,
                      in float2 InTex: TEXCOORD0,
                      in float4 InTangent: TEXCOORD2,
                      out float4 OutPos: POSITION,
                      out float2 OutTex: TEXCOORD0,
                      out float3 OutViewDir: TEXCOORD1,
                      out float3 OutSunDir: TEXCOORD2)
    {
        // Transform the vertex from object space to clip space:
        OutPos = mul(InPos, matWorldViewProj);

        // Pass the texture coordinate to the pixel shader:
        OutTex = InTex;

        // Compute 3x3 matrix to transform from world space to tangent space:
        matTangent[0] = mul(InTangent.xyz, matWorld);
        matTangent[1] = mul(cross(InTangent.xyz, InNormal)*InTangent.w, matWorld);
        matTangent[2] = mul(InNormal, matWorld);

        // Calculate the view direction vector in tangent space:
        OutViewDir = normalize(mul(matTangent, (vecViewPos - mul(InPos,matWorld))));

        // Calculate the light direction vector in tangent space:
        OutSunDir = normalize(mul(matTangent, -vecSunDir));
    }

First we add another input vector called InTangent. We use the input semantic TEXCOORD2 because the engine sends the tangents through this register.

We then calculate a matrix for converting the view and light direction vectors to tangent space. Such a conversion matrix basically consists of 3 unit vectors that are perpendicular to each other and point in the directions of the axes of the new coordinate system. The first vector is the tangent that was passed by the engine, converted to world coordinates. Note that our tangent is a float4, a 4-dimensional vector, so we need the .xyz suffix to indicate that we only want to use its first 3 components x, y and z. The third vector is our normal, which is always perpendicular to the surface and thus to our tangent. The second vector, the so-called binormal, is calculated from the cross product of the other two. A cross product of two vectors is guaranteed to be perpendicular to both; you'll find details in Appendix B.

Because the cross product and its negative are both perpendicular to the tangent and the normal, we use the 4th component of the tangent vector, InTangent.w, to resolve this handedness ambiguity and decide whether to invert the binormal or not. That's why we used a 4-dimensional vector for the tangent. The engine precalculates the handedness of the binormal and passes either -1 or 1 in the InTangent.w component.

There are two new output vectors called OutViewDir and OutSunDir where we put the tangent space view and light direction vectors.
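The matrix construction can also be sketched as a small helper that keeps the matrix in a local variable. This is only an illustrative variant, not the workshop's code: the name BuildTangentMatrix, the explicit float3x3 cast and the extra normalize calls are assumptions, and the sketch presumes that the world matrix contains no non-uniform scaling. The global matTangent declaration would still be needed as the hint for the engine to supply tangents.

```hlsl
// Hypothetical helper (not part of the workshop shader): builds the
// world-to-tangent-space matrix from the tangent, binormal and normal.
float3x3 BuildTangentMatrix(float4 Tangent, float3 Normal, float4x4 World)
{
    // Only the upper 3x3 part of the world matrix is needed for directions.
    float3x3 World3x3 = (float3x3)World;

    // First axis: the tangent passed by the engine, in world space.
    float3 T = normalize(mul(Tangent.xyz, World3x3));

    // Second axis: the binormal, flipped by the handedness stored in Tangent.w.
    float3 B = normalize(mul(cross(Tangent.xyz, Normal) * Tangent.w, World3x3));

    // Third axis: the surface normal, in world space.
    float3 N = normalize(mul(Normal, World3x3));

    // The three axes become the rows of the matrix, so mul(matrix, v)
    // yields (dot(T, v), dot(B, v), dot(N, v)).
    return float3x3(T, B, N);
}

// Possible use inside the vertex shader:
//   float3x3 ToTangent = BuildTangentMatrix(InTangent, InNormal, matWorld);
//   OutSunDir = normalize(mul(ToTangent, -vecSunDir.xyz));
```

Using rows for the axes means each component of the transformed vector is simply its projection onto one tangent-space axis.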

    // Pixel Shader:
    float4 NormalMapPS(
        in float2 InTex: TEXCOORD0,
        in float3 InViewDir: TEXCOORD1,
        in float3 InSunDir: TEXCOORD2): COLOR
    {
        // Read the normal from the normal map and convert from [0..1] to [-1..1] range
        float3 BumpNormal = tex2D(NormalMapSampler, InTex)*2 - 1;

        // Calculate the ambient term:
        float4 Ambient = AmbientIntensity * vecAmbient;

        // Calculate the diffuse term:
        float4 Diffuse = DiffuseIntensity * SunColor * saturate(dot(InSunDir, BumpNormal));

        // Calculate the reflection vector:
        float3 R = normalize(2 * dot(BumpNormal, InSunDir) * BumpNormal - InSunDir);

        // Calculate the specular term:
        InViewDir = normalize(InViewDir);
        float Specular = pow(saturate(dot(R, InViewDir)), SpecularPower) * SpecularIntensity;

        // Fetch the pixel color from the color map:
        float4 Color = tex2D(ColorMapSampler, InTex);

        // Calculate final color:
        return (Ambient + Diffuse + Specular) * Color;
    }

The pixel shader is the same as for the specular shader, but this time we receive the view and light direction vectors from the vertex shader and we use the perturbed pixel normal. The normal is retrieved by reading from the normal map at the given texture coordinates and converting it from the [0..1] range to the [-1..1] range.

We use InViewDir on TEXCOORD1 and InSunDir on TEXCOORD2 to receive the vectors that the vertex shader converted to tangent space.
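The reflection and specular steps of the pixel shader can also be written with HLSL's built-in reflect() intrinsic. The following is a minimal sketch under stated assumptions, not the workshop's code: the helper name SpecularFactor is hypothetical, and renormalizing all three vectors is an extra precaution added here.

```hlsl
// Hypothetical helper (not part of the workshop shader): specular factor
// computed from tangent-space vectors with the reflect() intrinsic.
float SpecularFactor(float3 Normal, float3 SunDir, float3 ViewDir, float Power)
{
    // Interpolated and texture-filtered vectors are generally not unit length.
    float3 N = normalize(Normal);
    float3 L = normalize(SunDir);
    float3 V = normalize(ViewDir);

    // reflect(i, n) returns i - 2 * dot(i, n) * n, so reflecting -L about N
    // gives 2 * dot(N, L) * N - L, the same vector as R in the pixel shader.
    float3 R = reflect(-L, N);

    return pow(saturate(dot(R, V)), Power);
}

// Possible use inside the pixel shader:
//   float Specular = SpecularFactor(BumpNormal, InSunDir, InViewDir, SpecularPower)
//                    * SpecularIntensity;
```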

The demo to run is normaldemo.c:

By using normal mapping we can simulate a lot of surface details that are not present in the actual geometry, which is significantly faster (both in art creation and rendering) than modelling all that detail. Notice that you can still recognize hard edges on the outline of the object because the normal mapping does not affect the polygons.
